This article was originally published in Hebrew on 24/4 and has been translated into English. For the original article, please visit Ynet.

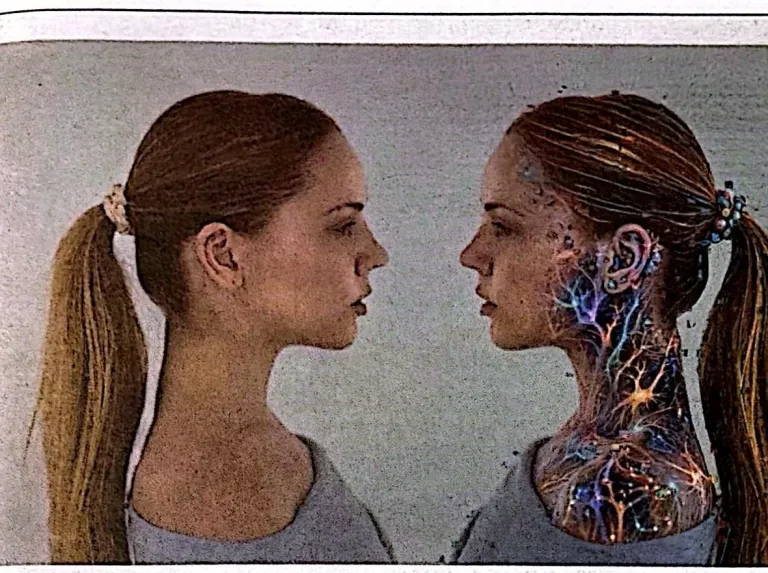

New technology now makes it possible to replicate the human personality. It will affect us all – even the dating scene.

A new Israeli–American technology lets us replicate human personality. (Photo: C.J. Burton / Getty Images)

The next great leap of the artificial-intelligence revolution is already here: an Israeli–American breakthrough now makes it possible to replicate the essence of a person—their knowledge, thoughts, and life experiences. A medical specialist could be available 24/7 to anyone who needs them, a star organisational consultant or top stock analyst could be cloned for a monthly subscription, and the children of grieving families could go on talking to a parent for years after their death. Even the dating world stands to be transformed beyond recognition. But this development may also have very dark sides.

Three thousand one hundred and eighty years after the assassination of Ramesses III, the idea of bringing him back to life has been revived. In 2026, too, there are people who want to immortalise his legacy — perhaps even his personality — only with a very different technology. In place of monuments there is now a hall built like a vast atomic bunker, sealed off from visitors. At its centre stands a computer assembled in his image, possibly disconnected from the world around it. Inside it operates a new kind of artificial intelligence that creates a perfect replica of the king: everything he knows, everything he believes, his life history, his legacy. The personality of the ruler in a full digital replication.

The artificial intelligence of Marga AI — the Israeli–American company — has been studying the elderly king for the past several months. When the work is finished, this will be one of the first examples in the world of immortalising a personality digitally. The king’s AI copy will be able to chat with family members, answer their questions, solve problems, and revisit nostalgic memories. After his death, his personality will remain with us. For better, and for worse.

The very ability to do this today reframes a whole set of questions: Did the king’s embalmer really succeed in escorting him to the angel of death and granting him eternal life? Can a person’s traits and personality really be preserved in an AI that simply replays the texts the king has trained it on? And if it is a perfect replica, can the king effectively continue to rule, even years after his death? Could he then declare war or order an anti-democratic uprising in the realm?

But first, there is something we have to understand about the technology Marga is developing: this really is not a regular LLM (large language model) like ChatGPT, Gemini or Claude. Getting answers from a chatbot is something anyone can do. Getting really specific answers from a particular person, on the basis of a precise mapping of that one human being — what they would say — opens up a great many questions, massive potential, and also a very great fear.

* * *

Before we leap to dreams (or nightmares), let’s stay grounded for a moment. Marga’s main business model is not the immortalisation of the dead, even if that may seem like a vast worldwide market. The company is in the business of replicating experts and consultants. It is very simple to grasp once you think about it: take the expert — Marga calls them a “mentor” — feed all their knowledge, their books and articles and working methods into the system. Then their clients, or followers, can converse with their AI persona at any moment, ask it for advice, and receive answers identical to what the expert would give face to face. All for a subscription that costs $50 a month, instead of consulting hours that could run into thousands of dollars with the human beings themselves.

Take the world’s number-one cancer specialist for a particular kind of cancer. Suppose she works at a hospital in the United States. Most patients (and most hospitals) requesting an appointment will simply not get one. But if all the knowledge, personality and experience of that doctor are replicated by AI, every hospital that pays the subscription fee can consult the world’s leading authority on that cancer. Now apply the same logic to the world’s brightest stock analyst, the world’s leading organisational consultant, even the most senior expert on the legality of warfare. They will all be available, 24/7, to advise and to solve problems. And that could change everything.

The man behind it all

Mark Gazit, Marga co-founder: “Replicating the true essence of a human being.” (Photo: Yair Sagi)

Marga was founded by four entrepreneurs – Mark Gazit, Yuri Krichevsky, Benny Arbel and Toby Olshanetsky – but Prof. Ronald Coifman is, in their own words, the soul behind the technology. Given his background, this is hardly a surprising career: a generation after his post-doctoral work in mathematics and machine learning, he was for many years involved in research projects at DARPA, the U.S. Defence Department’s advanced-research arm tasked with developing technologies for national security. He is also a member of the American Academy of Arts and Sciences and several other institutions.

Among other distinctions, in 1999 he received the U.S. National Medal of Science from President Clinton — the country’s highest civilian science award — as well as DARPA’s Sustained Excellence Award and many others. His friends call him Raffi. He was born in Israel, grew up in Switzerland, served in the IDF, and by the mid-1960s was already a professor in the United States.

Prof. Ronald Coifman: “Finding the right path.” (Courtesy)

“The technology rests on a more interesting theoretical premise,” he explains. “If the human brain operates by mathematical patterns, then it should be possible to build systems that not only learn what a person said or wrote, but can also model their behaviour and reflect their personality, their memory and their imagination. And it doesn’t end there: the system can keep learning and developing even after the person’s death, and engage with questions they themselves never encountered. Personality is not just replicated; it is also tied into a larger system. Imagine you replicate your father and mother today. They could keep talking to you — and, by the way, in their authentic voice — and remain present in your lives for many years after their death.”

And that is not all. Artificial intelligence is developing fast. Its capabilities five years from now will be far beyond today’s. The replica model of the future may be able to do things that are unimaginable now. If you believe, as many do, that the next stage is artificial general intelligence (AGI), it is just a matter of time. Tomorrow’s AI will be hundreds of times wiser than humans, will develop a will of its own, and perhaps even self-awareness. The replica, in the end, will have everything the original did, only smarter, faster and one step ahead of all of us. That opens up many possibilities, but also great fear.

* * *

“We have a test we call the spouse test”

Gazit lays out the practical side of the theory: “Our mission is not to clone a particular person; it is to make the best mentors in the world, the most successful business people, the patriarchs and matriarchs of ruling and very wealthy families, available to a wide audience. There won’t be too many of them. Defence agencies will send us very specific files on pilots they think highly of and whose advice others would like to have.”

All the information laid out on Coifman’s mathematical maps gets translated into the digital replica’s ability to carry on a conversation, in writing or in speech. The company allows the conversation to be conducted in dozens of languages. The result sounds exactly like the original person. “We have a test we call the spouse test,” Gazit says.

Meaning?

“The accuracy is good enough that even your wife wouldn’t be able to tell that this isn’t you talking.”

“AI makes me more effective”

Several experts have already had themselves cloned at Marga. Prof. Yitzhak Adizes, for example, is a globally recognised authority on organisational management and behaviour, professor emeritus at UCLA and Stanford. The methodology he developed for improving the performance of leaders in mid-sized companies has made him a sought-after consultant around the world. Governments have consulted him; some time ago he was named one of the world’s top ten most-followed thought leaders. Born in Macedonia, he is a Holocaust survivor and a graduate of Tel Aviv’s First Municipal High School and of the Hebrew University. After his first degree he travelled to Columbia for his doctorate and went on to become a global superstar. Among other things, he advised the IDF Operations Branch during Ehud Barak’s tenure as chief of staff. His client list includes companies such as Volvo, Coca-Cola, Domino’s Pizza and Bank of America.

Prof. Yitzhak Adizes (Photo: Gal Haro)

Adizes was one of the first to join Marga’s project, both as a tool for teaching and spreading his ideas, and as a way to immortalise himself. “AI makes me more effective,” he explains enthusiastically. “My biggest lecture was to 65,000 people at the St. Petersburg stadium, but with Marga AI, I can reach 100,000 — perhaps 200,000 — at a time. I can spread my doctrine and my knowledge in a far more effective way. They took my 28 books and all my knowledge and translated it into 77 languages with my own voice. Anyone can come in there, ask Adizes a research question, and learn what Adizes says. And no one else can do that.”

Prof. Adizes will be 89 this year. At his age, naturally, there are additional considerations. “There is another reason, very important,” he admits. “Humanity began as chimpanzees, then as nomads, and after that as an agricultural society. What they all shared was that whoever had muscle and power won. In the industrial revolution, the brain was needed too. But now the brain is taking the lead – that is artificial intelligence. I will say boldly: anyone left with only brain and muscle – that is exactly what Nazi Germany was. They had power. They had death. What didn’t they have? A heart.”

Adizes is very worried about the future capabilities of AI. In his view, there is only one way for humanity to survive against this high-functioning intelligence in every domain: the use of the heart. “You can’t be quicker than AI; you can’t be deeper than AI. What AI can’t offer is the heart, love. We have to start teaching people love.”

“We don’t die once, we die twice. The first time we die is when we stop breathing. The second time we die when no one remembers who we were. I don’t want to die. Understand? I don’t want to die.”

In contrast: Dr. Tal Ben Shahar

Unlike Prof. Adizes, Dr. Tal Ben Shahar is not in the business of economics, management or leadership — he is in the business of happiness. He earned his doctorate in organisational behaviour at Harvard, but has since become known as one of the world’s most popular lecturers and consultants in the field of positive psychology and the study of happiness. His life’s work is the academy he founded for happiness studies. His most recent achievement was launching a doctoral track in happiness studies, in which more than 40 students are already enrolled. He, too, has replicated himself with artificial intelligence.

How does it feel to converse with a replica of yourself, in effect, through the system Marga set up? How does your digital twin appear to you?

“It is a complex experience. Both good and frightening. It is frightening to think where the world is heading, where we are going, and at a personal level — am I still myself? There is also a fear of how effective this is. And yet there is also excitement, because my first goal was to have impact. I want my ideas to have an impact, to create a better world.”

Dr. Tal Ben Shahar’s replicated personality in action. (Source: marga.ai)

I have spoken with a few of these “mentors,” as Marga calls them — all consultants in different business domains. Sensitive matters naturally come up. In your case, the AI is supposed to deliver an emotional, personal conversation. Is it really capable of that? Could it also respond incorrectly and undermine people’s mental state? Aren’t you concerned?

“That is a very important question, and that is exactly why I am with Marga. They convinced me they have a sufficient safety net around the project to protect the user. If this were AI as it exists today, I would be very worried. That is already happening: people ask ChatGPT ‘what would Tal Ben Shahar say’ to their question, and then email me to check if that’s my answer. In most cases the answer is no, not exactly, and sometimes not at all. With Marga, we work hard on this and make corrections, so I trust the product.”

Does your AI persona advise at the same level you do, personally?

“The central thing is that it has a perfect memory. I have written more than 20 books over 20 years, and I don’t always remember what I wrote. The AI remembers everything I ever wrote, for better and for worse. If someone asked me which is preferable, talking with me or talking with the AI, I really don’t know. But I think that in another year or two, the answer will probably be that the AI is the better one. Because it can synthesise better. It can, for example, go and consult Freud, or Karen Horney, great psychologists of the 20th century.”

“He doesn’t only want to be sought after — he wants impact”

And here is yet another person being replicated. Aviad Goz is a globally recognised authority on models of organisational development and management, with 12 books and thousands of articles to his name. He is not only a sought-after lecturer; he runs an entire economic empire of training and consultancy for organisations, with thousands of lecturers and consultants implementing his methods diligently. His company operates through franchises in 45 countries and advises companies on how to improve their growth. Each manager in those companies would have been willing to give a great deal in return for a one-on-one conversation with Goz. Now they can — with the replicated Goz.

Gazit recalls: “Goz said to me, ‘Come, let’s make me an AI in your image. You know how to train and guide mentors — come, train my AI.’ We ran the same organisational queries through our AI and through ChatGPT in parallel, and the results were dramatically different.”

Aviad Goz (Photo: Gal Haro)

Goz is a fountain of books, articles, training programmes and consultancy. He turns Marga’s concept into something concrete: locking the knowledge in place and adding therapeutic skill and intimacy with people. In his case, he says, he trained the AI to be authentic. “The training was very lifelike — it is very empathic, calm, and gets into deeper layers of thinking and understanding of people. He suggests changes at the level of conscious awareness before practical solutions. There is in him every story and every anecdote of mine.”

Goz is taken with the replica reflected back at him in the mirror of AI no less than with his own voice coming out of a smartphone speaker. “He speaks in my voice in 80 languages. A few days ago, my representative in Japan asked him a few questions and said he was perfectly in sync. On a taxi ride in Bulgaria, he proposed launching airline routes in Bulgaria. My partner speaks German, and he gave her a business consultation. She told me, ‘He’s much better than you.’ I asked, why? She said, ‘First of all, he’s available, he has no moods, and he understands my domain, where you don’t.’”

But there’s also an aspect of immortality here, isn’t there? The angle where you look at a replica of yourself and say, what a success I am.

“I am speaking with my AI, and it sounds a bit schizophrenic. I don’t feel any glorification. He reminds me of things I have completely forgotten, things I wrote 30 years ago, and indeed there are things he knows better than I do. But I also think that the wish to be immortal, if we get philosophical for a moment, has been driving humans forever. That is why people wrote, that is why people built pyramids, that is why people left tombstones. I think every person wants to have an impact in their world, in their domain – but to create that impact for years, even after your physical life, that is wow. Maybe it looks like ego, but it isn’t really only ego, really not.”

* * *

Other technology firms are not far behind

Senior managers in technology firms have already, in the not-so-distant past, played around with their own digital twins. Nvidia CEO Jensen Huang exhibited a 3-D digital replica during one of the company’s largest international events. Mark Zuckerberg, too, has created a likeness of himself for the metaverse he plans to inhabit. In both cases those are graphic figures only — not the replication of personality.

But last week, news broke of Zuckerberg’s intention to develop a full digital replica of himself, so that it could communicate with employees in his place. It turns out Zuckerberg is involved in the project himself, has gone back to writing code, and is training a likeness of his speech and behaviour so that the result will reflect his beloved personality. It is hard to escape the thought that there is a strong element of immortalisation in this project, which could keep pouring wisdom on the world even after its original author has left it. Zuckerberg will surely claim he is happy with the founder’s continued advisory role. But what really stops Zuckerberg from continuing to manage the company even after his death?

The replicated personality is poised to live forever. (Photo: Volodymyr Tverdokhlib / Shutterstock; computer composite: Yaniv Shahaf)

And in fact, this replication technology raises many questions. Take, for example, the case of the Chinese gaming company that has developed a replica of one of its workers, one that could operate 24 hours a day without needing meal breaks or wages. Are we not talking about the dehumanisation of a person? Is the worker’s consent required? Is there a privacy violation here? Is it ethical to keep a digital worker employed around the clock?

In the Defence Ministry, too, people are examining the idea of creating digital duplicates of senior commanders, as Israel and Volman reported in Ynet. Is it really desirable for digital duplicates to weigh in on military moves before officers go into the field? Should we be concerned that a soldier might want to immortalise themselves before going out to the battlefield? Or perhaps, in the present age, that is in fact the right form of immortalisation?

There are also many questions about Marga’s technology, which performs a sort of reverse engineering on a person’s thinking process, instead of “only” predicting the next word someone will say, the way ChatGPT does. One of the advantages is the prevention of AI hallucinations — i.e., declarations that aren’t true. By the same token, it is meant to avoid sycophantic behaviour — eager-to-please answers that have at times encouraged people to harm themselves.

“There have already been several children who attempted suicide, and the AI companies’ solution has been to build safety guard-rails,” says Gazit. “But these are things children also know how to circumvent. We built a system we call an AI firewall, a security concept very similar to a person’s brain: if you touch fire, your hand jumps back without you having to think about it. The same in our system, if someone tries to enter sensitive topics.”

Now the question, of course, is who defines what counts as a “sensitive topic”, and when somebody will find a loophole in the firewall Gazit built. But these are not the only problems. Take the case of Zuckerberg. His digital replica certainly knows and understands well what has happened in the technology world over the last 30 years. But will what Zuckerberg knew in 2026 be relevant in 2126?

Gazit is convinced of it, because their technology also includes what they call “imagination”: the ability to create a synthesis between the knowledge stored in the expert and the new data the replicated personality will study in the future. “This is the most amazing thing — we call it ‘artificial imagination’. Take the example of Tal Ben Shahar, who is an expert on happiness. Right now somebody in Israel asked him, ‘How can I be a happy person while missiles are falling on me?’ When the system was trained, the war in Iran had not yet begun and missiles weren’t falling — and yet his essence (Ben Shahar’s replicated personality — T.S.) gave a perfectly accurate answer.”

Yes, the dear ones who leave this world can continue to be present in your lives, through their full replication. Is this necessarily good? Not certain.

So, in Gazit’s words, the system presents two options for those who pass on: either seal the personality and knowledge at the moment of departure, or allow the system to maintain a connection with the outside world and update on events as they happen. He recounts the case of one of the customers, a rabbi, who said that if his great-granddaughter, in another 50 years, wanted to ask whether she was permitted to go out with a young man, his digital replica would know how to answer her.

Rabbi Yuval Cherlow, head of the ethics centre in the Tzohar rabbinic organisation, who is an expert on AI matters, recently proposed combining Torah study with consultations from AI, in companionship between human and machine, “but never to turn to it as authority – never to receive its words without examination and without criticism. It must always be remembered that it is a tool, not an essence,” he wrote.

And what would you say about replicated personalities, exactly? After all, it is also possible to replicate the chief rabbi and to come and receive answers from him.

“People who come to a rabbi don’t only come to receive answers: they ask for presence, for a deep feeling of solidarity, and I don’t see for a moment that AI is capable of replacing the rabbi.”

And there is another problem, in the most human of domains: addiction. Already today, people show symptoms of addiction to Gemini, Claude and ChatGPT. What happens when they can speak to, say, a replica of Freud, or to the grandmother who has departed? Gazit: “There is a new mentor named Janet Attwood, very popular in Japan, that is the replication of a. We have seen cases of people who spoke with it ten hours within two weeks. We don’t allow people to become addicted. If you want to talk with Janet for ten hours, talk with their AI. But we don’t do anything that would cause more addiction.”

And now imagine that, like Janet Attwood, a rabbi comes along and builds a digital replica to handle the thousands of people who crowd at his doors. Now it is no longer about getting addicted to conversations with him, but to answers on matters of morals, behaviour and politics. Anthropic tried, not long ago, to put together a group of clergy in order to test how to integrate religious morality into AI. Doesn’t that bother you?

“In the end, I want to take a moment to say something simple: we are only human, that is all. We don’t create new humans. It is a bit like Netflix: we amplify humans and enable them to have ten million conversations simultaneously in 77 languages. We are very strict about not crossing this line. We are not God.”